Online isn’t so good when you want learners to interact – or is it?

It’s a familiar concern – while learning online has some advantages, it’s just not that good at enabling the kind of social interaction that can be important for learning. Face-to-face learning, or blended learning where there is some form of physical interaction, is commonly held to outperform online learning. Online learning is thought to limit interaction between learners, and this, in turn, is often thought to negatively impact their learning outcomes.

But what if it isn’t quite so simple? In our new book – Digital Technology in Capacity Development: Enabling Learning and Supporting Change – we take six common assumptions about digital learning, and interrogate them with data gathered from ten years of work supporting 20,000 professionals from across the Global South.

Read on for more ideas about how to encourage participation online, and watch the webinar to learn from our expert panel: Beyond content delivery: How to make online learning truly participatory?

How we encourage interaction

In recent years we’ve used a range of different approaches to making learning more interactive, by encouraging interaction between participants and facilitators in our online courses. These include asynchronous approaches and synchronous approaches, for example:

- Facilitated forums, in which course facilitators respond to participants’ questions, pose their own, or encourage discussion within the group.

- Peer assessment, where participants provide feedback on each other’s work.

- Group‐work, where participants are assigned to different groups and asked to collaborate on an assignment.

- Mentoring, where individuals are mentored by more experienced researchers, or where peers mentor each other and learn together.

- Live drop‐in clinics, to enable participants to ask questions, discuss particular issues, or to get help dealing with specific problems.

- Live text‐based chats or video sessions, where participants come together at specific times to hear from a guest speaker or panel, or discuss issues together, using tools like WhatsApp or Zoom.

The aim in all of these approaches – whether support comes from expert facilitators or peers – has been to make learning social, by encouraging participants to interact with each other as they study, and to use a course to create a community of learners.

While there’s no evidence to suggest that outcomes were less positive when interaction was more limited, directly encouraging interaction between participants seems to secure higher completion rates. This is perhaps because it keeps participants motivated and interested, and helps to overcome the isolation that online learning can sometimes bring. Nine in 10 participants in a recent MOOC on scientific research writing told us the level of support and guidance we offered was enough to ensure they could complete the course successfully.

Feedback helps participants apply their learning

We’ve also found that learners see studying online as a positive experience in its own right, and that the opportunities for interaction have played an important role.

For example, in one course (for Southern academics who were also journal editors) each participant was asked to create an action plan, and then got feedback from the facilitators later. That level of interaction was much easier to provide in a course spread over several weeks than it would have been in an intensive face-to-face workshop, and 95% rated the feedback as useful.

And across four recent MOOCs on research writing, 30%‐43% rated feedback provided via peer assessments 5 out of 5, and 72%-80% said it was either ‘very’ or ‘somewhat’ useful. Participants report that giving feedback is also a useful learning experience, because it helps them to apply what they’ve learnt.

Facilitation makes a difference

We know that facilitation makes a difference because we see what changes where it’s missing. We’ve made several of our courses available as self-study resources, so that they can be accessed flexibly outside of our facilitated course programme. While feedback is good, completion rates are around half of what we see in moderated or facilitated courses. Even relatively light moderation or facilitation (for example, sending weekly announcements about upcoming course activities and reminders about deadlines) can have a positive impact on completion rates.

Something that learners seem to particularly value – and which is much more pronounced in online courses – is the opportunity to interact with peers and facilitators from a wide range of countries. That was particularly the case in our large-scale MOOCs, which involve up to 3,500 learners in a single course, and in our “journal clubs”, which are typically several hundred to a thousand strong.

But there are also times when limiting group size is better. We’ve found that where participants face specific barriers, either within a country or at the institution level, bespoke training with more limited participation is more effective. The important thing is to assess each situation on a case-by-case basis.

Five factors that encourage interaction

So why do we think our results differ from what’s reported in the wider literature? We’ve identified five factors that we think are significant.

- In our MOOCs, we draw facilitators from a global community. This has ensured high levels of interaction in the discussion forums.

- We encourage group work, and each of our courses has some kind of authentic assessment and a rubric to facilitate peer review.

- Our courses are mostly asynchronous, to increase flexibility and inclusion, but we organise live sessions to create a feeling of presence among the learners and facilitators.

- We aim to use tools that people are comfortable or already familiar with (for example, Zoom is widely used in many countries we work with, Teams much less so) and we try not to add more than one new or less familiar tool per course. While we are keen to introduce people to how they might do things differently with technology, we do not want to prioritise technology over learning.

- In our self-study tutorials we build “facilitator voice” into the content by developing reflective exercises and providing examples of solutions and commentaries.

Case study: the impact of light facilitation on learning outcomes in our critical thinking course

In 2021, we launched our critical thinking course both as a self-study tutorial and in a lightly facilitated version. Facilitation involved supporting participants through announcements about their expected learning progress once or twice a week, answering technical questions about the learning platform, and encouraging participants to share their ideas and questions in a content-related discussion forum. Within the forum, facilitation was mainly restricted to keeping an eye on the posts to make sure that discussions were respectful and relevant. We then compared feedback data for the two courses. Participants in the facilitated version of the course reported slightly more positive outcomes than those who completed the self-study version. However, what is more interesting is that those who began the facilitated course were almost twice as likely to complete it, compared with those who undertook the self-study version. So, there is some evidence that the presence of interaction may secure higher completion rates, perhaps by motivating participants or sustaining their interest.

Join us to learn more – Beyond content delivery: How to make online learning truly participatory?

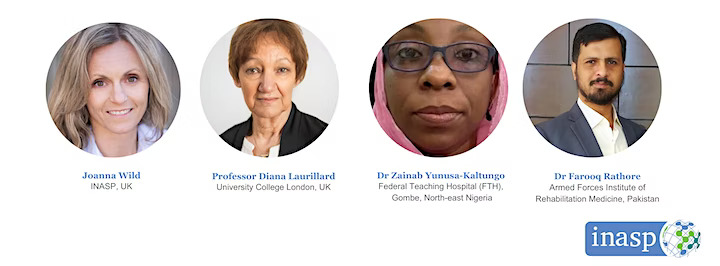

If you’re interested in hearing more, join us on 31st March at 12.00 BST for the first in a series of discussions, where we’ll explore these issues in more depth with expert speakers.

Watch the recording here: Beyond content delivery: How to make online learning truly participatory?

There are two further webinars in the series:

25 April, 12.00 BST: How can we design online learning to compensate for digital infrastructure constraints?

Panellists:

- Shanali Govender, Centre for Innovation in Teaching and Learning, University of Cape Town, South Africa

- Dr Kendi Muchungi, Brain and Mind Institute, Aga Khan University, Kenya

- Professor Dilshani Dissanayake, Research Promotion & Facilitation Centre, Faculty of Medicine, University of Colombo, Sri Lanka

16 May, 12.00 BST: How can gender-responsive pedagogy inform the design of online courses?

Panellists:

- Dr Nicola Pallitt, Centre for Higher Education Research, Teaching and Learning, Rhodes University, South Africa

- Dr Funmilayo Doherty, Yaba College of Technology, Lagos, Nigeria

- Dr Albert Luswata, Uganda Martyrs University, Uganda
